{ Explore | Examine | Expose | Explain } your model with the explabox!

Developed to meet the practical machine learning (ML) auditing requirements of the Netherlands National Police, explabox is an open-source Python toolkit for the complete ML auditing lifecycle. It implements a standardized four-step workflow (Explore, Examine, Expose, and Explain) to produce reproducible and holistic evaluations of text-based models.

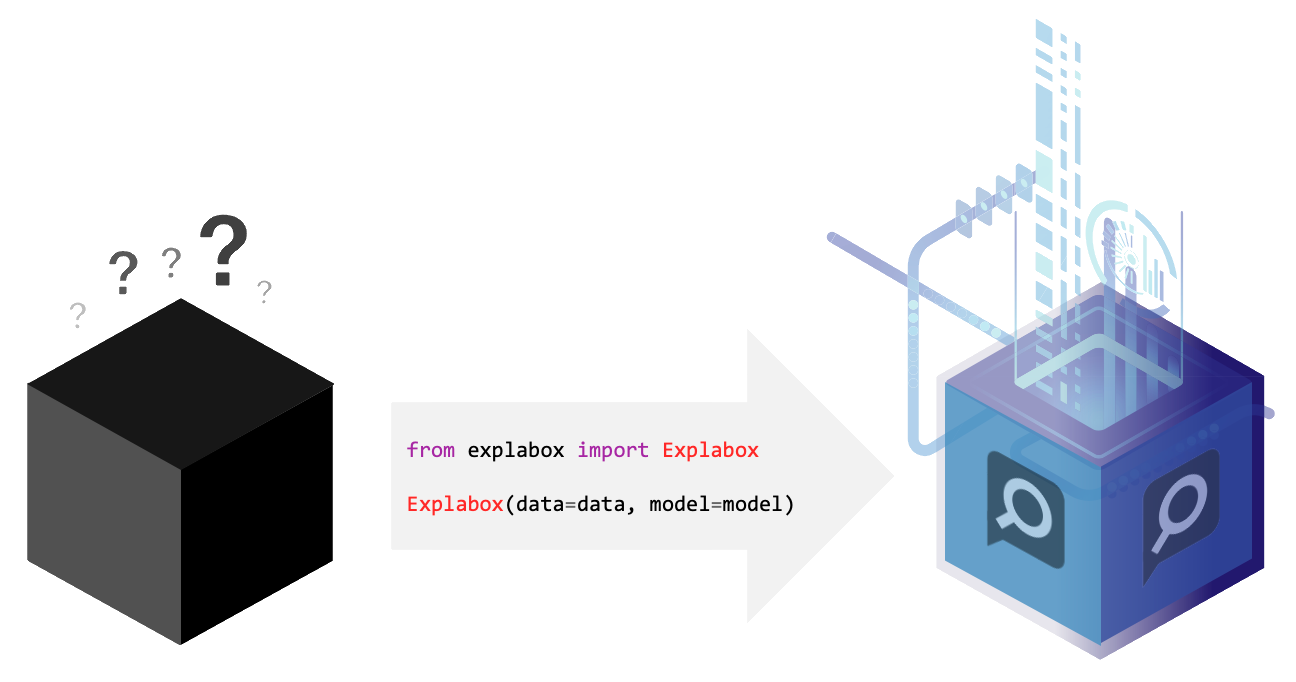

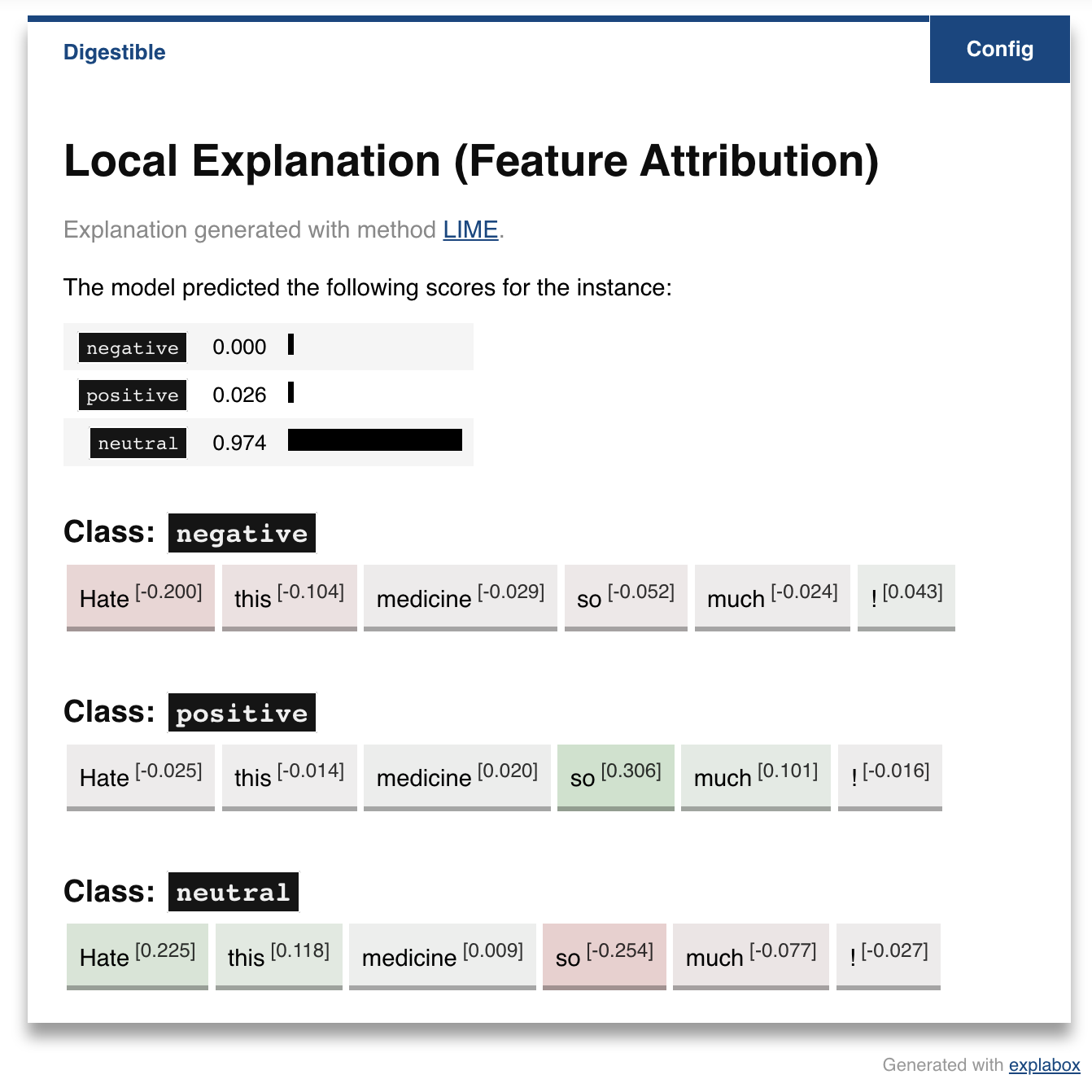

The framework turns opaque models and data (ingestibles) into interpretable reports and visualizations (digestibles) tailored for diverse stakeholders, from developers and auditors to legal and ethical oversight bodies. It aids in explaining, testing, and documenting AI/ML models, whether developed in-house or acquired externally.

explabox operationalizes the audit process through its standardized four-step workflow:

Explore: describe aspects of the model and data.

Examine: calculate quantitative metrics on how the model performs.

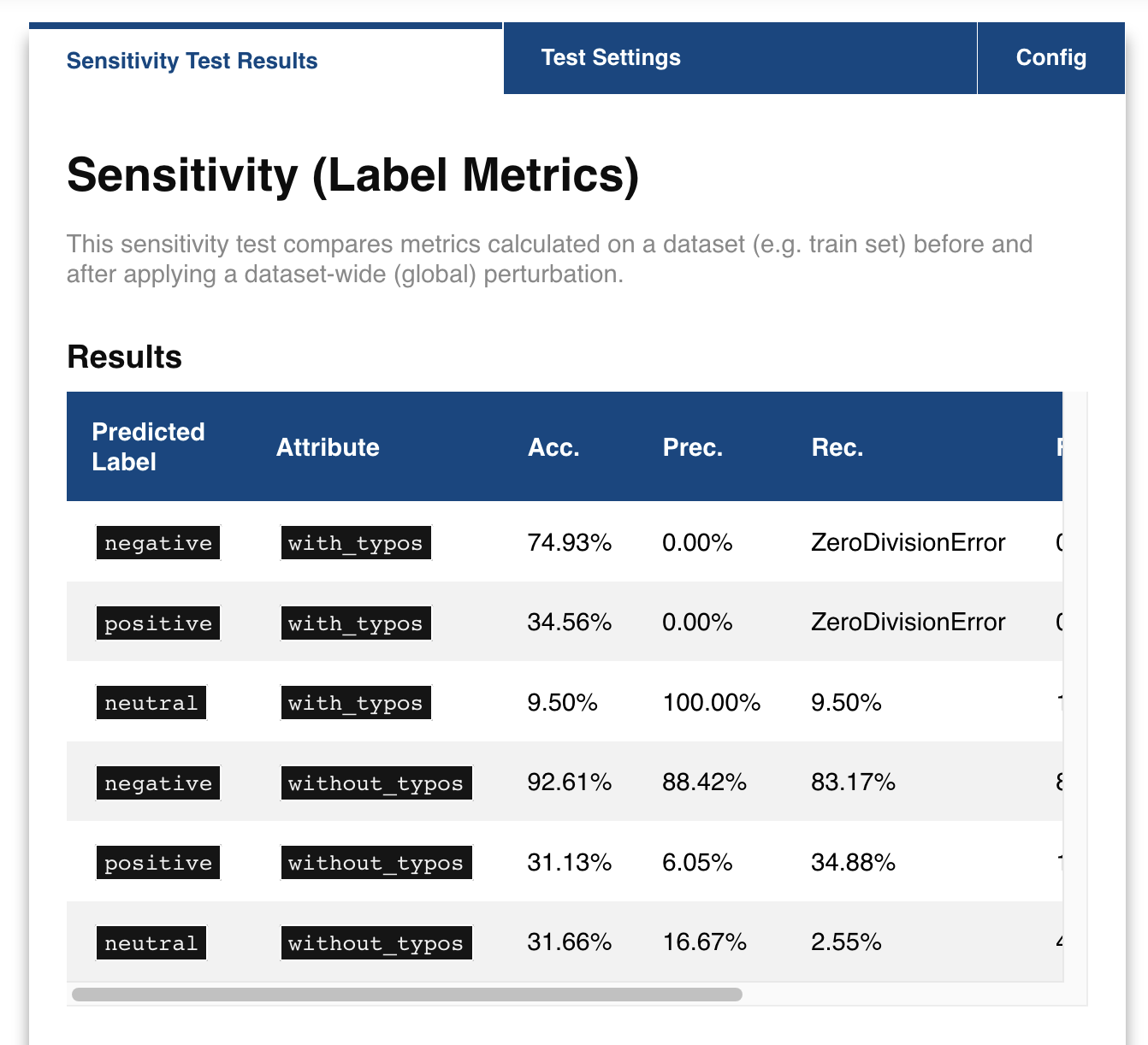

Expose: probe model sensitivity to random inputs (safety), test model generalizability (robustness, e.g. sensitivity to typos), and measure the effect of adjusting attributes in the inputs (fairness, e.g. swapping male pronouns for female pronouns), both for the dataset as a whole (global) and for individual instances (local).

Explain: use explainable AI (XAI) methods to explain the whole dataset (global), model behavior on the dataset (global), and specific predictions/decisions (local).
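To make the Expose step more concrete, the following is a minimal, self-contained sketch of a robustness check (typos) and a fairness check (pronoun swap). It does not use the explabox API: `toy_model`, `add_typos`, `swap_pronouns`, and `accuracy` are hypothetical helpers written for illustration only, and the keyword-based classifier merely stands in for a real text model.

```python
import random

def toy_model(text: str) -> str:
    """Hypothetical stand-in classifier: simple keyword lookup, not a real model."""
    words = text.lower().split()
    if any(w in {"hate", "awful", "worse"} for w in words):
        return "negative"
    if any(w in {"love", "great", "helped"} for w in words):
        return "positive"
    return "neutral"

def add_typos(text: str, rng: random.Random) -> str:
    """Swap two adjacent characters in one randomly chosen word of length >= 4."""
    words = text.split()
    candidates = [i for i, w in enumerate(words) if len(w) >= 4]
    if not candidates:
        return text
    i = rng.choice(candidates)
    w = words[i]
    j = rng.randrange(len(w) - 1)
    words[i] = w[:j] + w[j + 1] + w[j] + w[j + 2:]
    return " ".join(words)

def swap_pronouns(text: str) -> str:
    """Replace male pronouns with female ones (whitespace tokens only, output lowercased)."""
    mapping = {"he": "she", "him": "her", "his": "her"}
    return " ".join(mapping.get(w.lower(), w) for w in text.split())

def accuracy(texts, labels, model=toy_model) -> float:
    """Fraction of instances for which the model predicts the given label."""
    return sum(model(t) == y for t, y in zip(texts, labels)) / len(texts)

texts = ["I hate this medicine", "This drug helped a lot", "He takes his pills daily"]
labels = ["negative", "positive", "neutral"]

# Global robustness: compare a metric on the original and the perturbed dataset.
rng = random.Random(0)
perturbed = [add_typos(t, rng) for t in texts]
clean_accuracy = accuracy(texts, labels)
typo_accuracy = accuracy(perturbed, labels)

# Local fairness: does swapping pronouns change the prediction for one instance?
prediction_changed = toy_model(texts[2]) != toy_model(swap_pronouns(texts[2]))
```

A drop from `clean_accuracy` to `typo_accuracy` would suggest the model is sensitive to typos, while `prediction_changed` being true for an instance would flag a potential fairness issue worth inspecting.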

A number of analyses in the explabox can also be used to provide transparency and explanations to stakeholders, such as end-users or clients.

Note

The explabox currently supports only natural language text as a modality. In the future, we intend to extend support to other modalities.

Important

The corresponding paper for the explabox, published in the Journal of Open Source Software (JOSS), can be accessed online at doi:10.21105/joss.08253.

Quick tour

The explabox is distributed on PyPI. To use the package, install it (pip install explabox), import your data and model, and wrap them in the Explabox. The example dataset and model shown here can be imported from the demo package explabox-demo-drugreview.

First, import the pre-provided model, and import the data from the dataset_file. All the explabox needs to know is which column(s) contain your data and which contain the corresponding labels:

from explabox import import_data, import_model

data = import_data('./drugsCom.zip', data_cols='review', label_cols='rating')

model = import_model('model.onnx', label_map={0: 'negative', 1: 'neutral', 2: 'positive'})
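As a rough illustration of what a `label_map` is for, the sketch below turns raw class scores into a human-readable label. It assumes the model emits one score per class, ordered by class index; `decode` is a hypothetical helper and does not show how explabox handles this internally.

```python
label_map = {0: 'negative', 1: 'neutral', 2: 'positive'}

def decode(scores, label_map):
    """Return the label whose class index has the highest score."""
    best_index = max(range(len(scores)), key=scores.__getitem__)
    return label_map[best_index]

# Assumed example output for a single review: one score per class index.
predicted = decode([0.1, 0.2, 0.7], label_map)
```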

Second, we provide the data and model to the Explabox, and it does the rest! Rename the splits from your file names for easy access:

from explabox import Explabox

box = Explabox(data=data,
               model=model,
               splits={'train': 'drugsComTrain.tsv', 'test': 'drugsComTest.tsv'})

Then .explore, .examine, .expose and .explain your model:

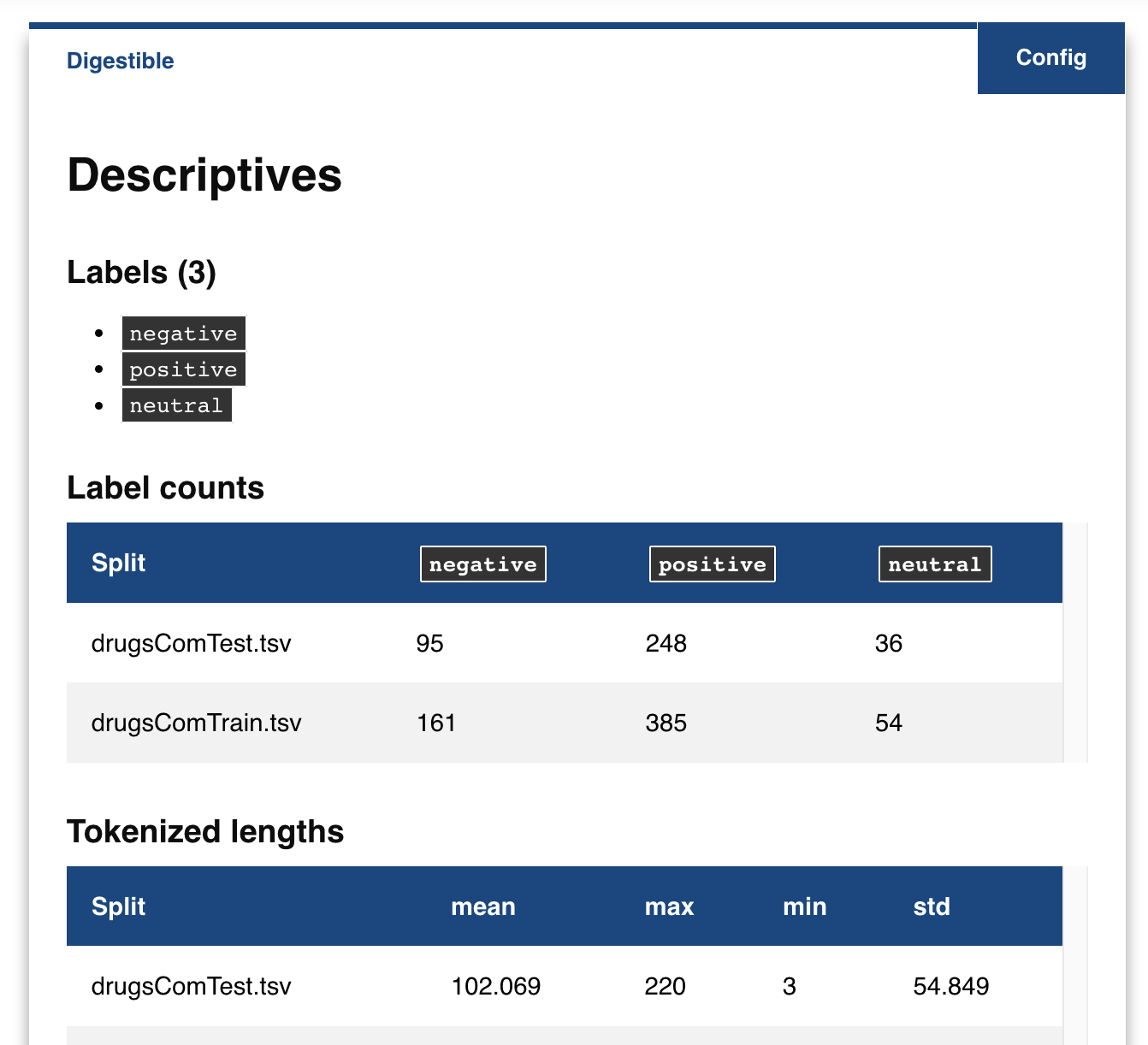

# Explore the descriptive statistics for each split

box.explore()

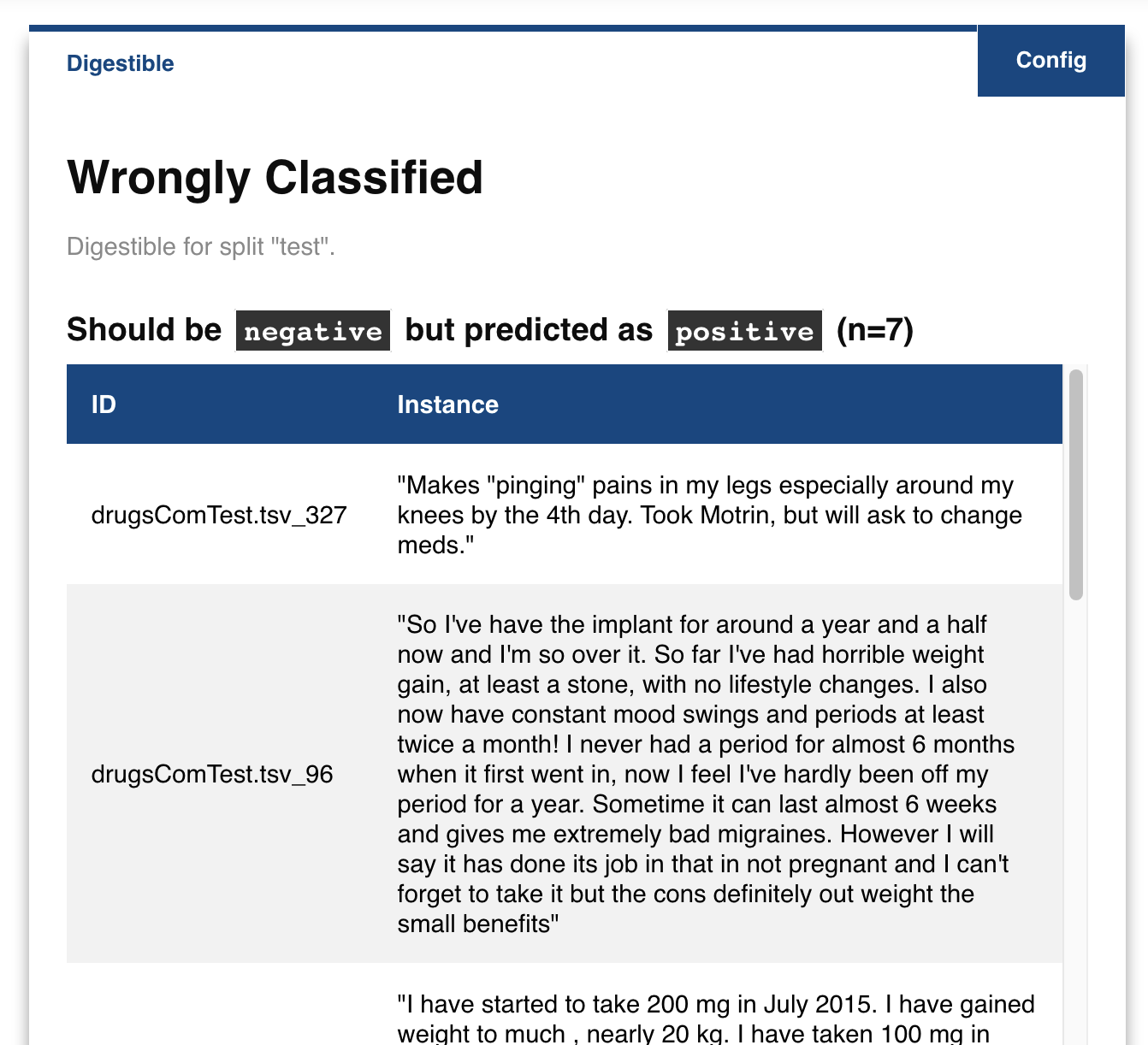

# Show wrongly classified instances

box.examine.wrongly_classified()

# Compare the performance on the test split before and after adding typos to the text

box.expose.compare_metric(split='test', perturbation='add_typos')

# Get a local explanation (uses LIME by default)

box.explain.explain_prediction('Hate this medicine so much!')
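To give an intuition for what a local explanation contains, here is a minimal sketch of a leave-one-word-out (occlusion) attribution. This is a deliberately simpler technique than LIME and is not what the explabox runs; `toy_score` is a hypothetical scoring function standing in for a model's probability for the negative class.

```python
def toy_score(text: str) -> float:
    """Hypothetical 'negative' score in [0, 1]; stands in for a model's class probability."""
    negative_words = {"hate": 0.6, "awful": 0.5, "much": 0.1}
    total = sum(negative_words.get(w.strip("!.,?").lower(), 0.0) for w in text.split())
    return min(1.0, total)

def occlusion_explanation(text, score_fn):
    """Attribute to each word the drop in score observed when that word is removed."""
    words = text.split()
    base = score_fn(text)
    attributions = []
    for i, word in enumerate(words):
        reduced = " ".join(words[:i] + words[i + 1:])
        attributions.append((word, base - score_fn(reduced)))
    return attributions

explanation = occlusion_explanation("Hate this medicine so much!", toy_score)

# The word whose removal lowers the 'negative' score the most.
top_word = max(explanation, key=lambda pair: pair[1])[0]
```

Words with a large positive attribution push the prediction towards the explained class; words with an attribution near zero have little influence under this toy scoring function.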

Using explabox

- Installation

Installation guide, covering installation via pip or directly from the Git repository.

- Example Usage

An extended usage example, showcasing how you can explore, examine, expose and explain your AI model.

- Overview

Overview of the general idea behind the explabox and its package structure.

- Explabox API reference

A reference to all classes and functions included in the explabox.

Development

- Explabox @ Git

The Git repository contains the open-source code and the most recent development version.

- Changelog

Changes for each version are recorded in the changelog.

- Contributing

A guide to making your own contributions to the open-source explabox package.

Citation

If you use the explabox in your work, please read the corresponding paper at doi:10.21105/joss.08253, and cite the paper as follows:

@article{Robeer2025,
  title = {{Explabox: A Python Toolkit for Standardized Auditing and Explanation of Text Models}},
  author = {Robeer, Marcel and Bron, Michiel and Herrewijnen, Elize and Hoeseni, Riwish and Bex, Floris},
  doi = {10.21105/joss.08253},
  journal = {Journal of Open Source Software},
  year = {2025},
  volume = {10},
  number = {114},
  pages = {8253},
}